Finally, it's reality. What was once considered fiction is now part of our world. The era of brain-controlled robots has just begun.

AFTER buttoning up a lab coat, snapping on surgical gloves and spraying them with alcohol, I am deemed sanitary enough to view a robot’s control system up close. Without such precautions, any fungal spores on my skin could infect it. “We’ve had that happen. They just stop working and die off,” says Mark Hammond, the system’s creator.

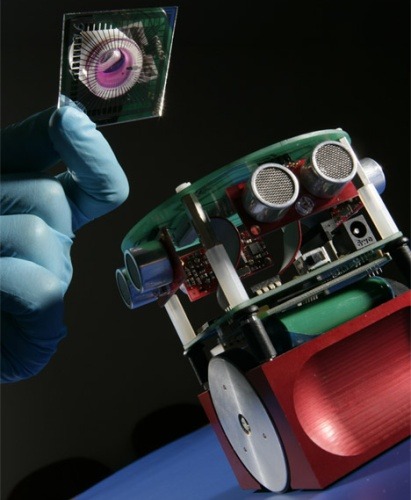

This is no ordinary robot control system – a plain old microchip connected to a circuit board. Instead, the controller nestles inside a small pot containing a pink broth of nutrients and antibiotics. Inside that pot, some 300,000 rat neurons have made – and continue to make – connections with each other.

As they do so, the disembodied neurons are communicating, sending electrical signals to one another just as they do in a living creature. We know this because the network of neurons is connected at the base of the pot to 80 electrodes, and the voltages sparked by the neurons are displayed on a computer screen.

It’s these spontaneous electrical patterns that researchers at the University of Reading in the UK want to harness to control a robot. If they can do so reliably, by stimulating the neurons with signals from sensors on the robot and using the neurons’ response to get the robots to respond, they hope to gain insights into how brains function. Such insights might help in the treatment of conditions like Alzheimer’s, Parkinson’s disease and epilepsy.

“We’re trying to understand what is going on inside this brain material that could have direct implications for human health,” says Kevin Warwick, Reading’s head of cybernetics, who is running the project with Hammond and Ben Whalley, both neuroscientists.

The team are far from alone in this aim. At a July conference on in-vitro recording technology in Reutlingen, Germany, teams from around the world presented projects on culturing brain material and plugging it into simulations and robots, or “animats” as they are known.

To create the “brain”, the cortex of a rat fetus is surgically removed and dissociating enzymes are applied to it to separate the neurons from one another. The researchers then deposit a thin layer of these isolated neurons into a nutrient-rich medium on a bank of electrodes, where they start reconnecting. They do this by growing projections that reach out to touch the neighbouring neurons. “It’s just fascinating that they do this,” says Steve Potter of the Georgia Institute of Technology in Atlanta, who pioneered the field of neurally controlled animats. “Clearly brain cells have evolved to reconnect under almost any circumstance that doesn’t kill them.”

After about five days, patterns of electrical activity can be detected as the neurons transmit signals around what has become a very dense mesh of axons and dendrites. The neurons seem to be randomly firing, producing pulses of voltage known as action potentials. Often, though, many or all of them will fire in unison, a phenomenon known as “bursting”.
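To make the idea of “bursting” concrete, here is a minimal sketch of how synchronized firing might be flagged in recorded data. It is an illustration only, not the researchers’ method: the function name `burst_bins`, the 0.1-second bin width and the 50 per cent threshold are all assumptions.

```python
from typing import Dict, List, Set


def burst_bins(
    spike_times_s: Dict[int, List[float]],
    bin_s: float = 0.1,          # assumed bin width in seconds
    min_fraction: float = 0.5,   # assumed share of electrodes that must fire
) -> List[int]:
    """Return indices of time bins in which at least `min_fraction`
    of the recorded electrodes fired at least once."""
    n_electrodes = len(spike_times_s)
    active: Dict[int, Set[int]] = {}
    for electrode, times in spike_times_s.items():
        for t in times:
            # Group each spike into its time bin and remember which electrode fired.
            active.setdefault(int(t // bin_s), set()).add(electrode)
    return sorted(
        b for b, electrodes in active.items()
        if len(electrodes) / n_electrodes >= min_fraction
    )


# Hypothetical recording: four electrodes fire alone at first,
# then all fire together shortly after the one-second mark.
example = {
    0: [0.05, 1.02],
    1: [0.31, 1.04],
    2: [0.72, 1.07],
    3: [1.01],
}
print(burst_bins(example))
```

Isolated spikes fall into separate bins and are ignored; only the bin in which most electrodes fire together is reported.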

There are various views on what these bursts are. Some see them as pathological activity – akin to what happens in epilepsy – while others see them as the neural network expressing a stored memory. “I interpret them as seizure-like behaviour,” says Potter. “I think the bursting is a function of sensory deprivation.”

Like a creature with no limbs or senses, the cut-down brain is simply bursting out of boredom, says Whalley. “With no structured sensory input the hypothesis is that you get arbitrarily random and quite often detrimental activity because all these cells are asking for some kind of direction.”

To test this notion, Potter’s team “sprinkled” pulses of electricity across a number of contacts on the multi-electrode array (MEA), to simulate sensory inputs, and managed to significantly quell bursting activity. “It seems that sensory input is setting the background level of activity inside the brain,” says Potter.

These results have encouraged the researchers to begin investigating disease pathology with robots controlled by the cortical cultures. If they can make a robot do something repeatedly by sending signals to the culture, and then alter the brain chemically, electrically or physically to upset this controllability, they hope to be able to work out some causes and effects that throw light on disorders such as Alzheimer’s.

To do this, Whalley’s colleagues Dimitris Xydas and Julia Downes start by connecting a culture to an ultrasound sensor in a wheeled robot. They then record the spikes of voltage produced at points within the culture when signals from the sensor are sent to it. When they find an area that fires consistently when the sensor input reaches it, those signals can be picked up by an electrode and used to, say, make the robot avoid an obstruction. For example, if the ultrasound sensor indicates “wall dead ahead” with a 1 volt signal, and a certain knot of neurons in the culture always generates a 100-microvolt action potential when that happens, the latter signal can be used to make the robot steer right or left to avoid the wall.
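The wall-avoidance example above can be sketched as a simple loop from sensor to culture to motor command. This is a toy under stated assumptions: `simulated_culture_response` is a hypothetical stand-in for the living culture, and the 50-microvolt detection threshold is invented for illustration. Only the 1 volt sensor signal and the 100-microvolt response come from the example in the text.

```python
from typing import List

SPIKE_THRESHOLD_UV = 50.0  # assumed detection threshold, in microvolts
WALL_SIGNAL_V = 1.0        # sensor level meaning "wall dead ahead"


def simulated_culture_response(sensor_v: float) -> List[float]:
    """Hypothetical stand-in for the culture: a low-amplitude trace,
    with a 100-microvolt peak when the sensor signals a wall."""
    trace_uv = [5.0, -4.0, 6.0, -5.0, 4.0]
    if sensor_v >= WALL_SIGNAL_V:
        trace_uv[2] = 100.0
    return trace_uv


def steering_command(
    trace_uv: List[float], threshold_uv: float = SPIKE_THRESHOLD_UV
) -> str:
    """Turn if the monitored electrode recorded a spike, otherwise go straight."""
    if any(abs(v) >= threshold_uv for v in trace_uv):
        return "turn"
    return "forward"


for sensor_v in (0.0, 1.0):
    print(sensor_v, steering_command(simulated_culture_response(sensor_v)))
```

In the real set-up the response comes from living neurons rather than a function, so it is far noisier than this sketch suggests.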

To do this, of course, they need to connect their brain culture to the robot. Because it is living material, it needs to be kept at body temperature, so the control system is placed in a temperature-controlled cabinet the size of a microwave oven and communicates with the robot over a Bluetooth radio link.

The robot then whirrs around a wooden corral, and in about 80 per cent of its interactions with the walls manages to successfully avoid them. The researchers now plan to plot neural connections before and after such extended journeys to see if the connections are strengthening, says Downes.

At Georgia Tech, Potter has achieved similar results, getting his mobile robot to avoid obstacles 90 per cent of the time. He is hoping the research will help doctors to find ways to retrain or bypass malfunctioning neuronal circuits in people with epilepsy, and he is also starting work on Alzheimer’s.

The first step towards this, though, is to find a way to train the neurons into a more permanent state of reacting to sensor inputs at the right times. In a paper to be published in the Journal of Neural Engineering, Potter describes a novel training system for these mini brains.

What he has found is that a sequence of electric pulses applied to up to six electrodes on an MEA acts as a kind of “mode switch” for the culture, changing its behaviour from being good at, say, steering a robot in a straight line to manoeuvring to avoid an obstacle. But because all cultures are different, he doesn’t know which pulse sequences will work best for each of them. So he randomly generates 100 different sequences – called pattern training stimuli – for each culture and lets a computer work out which ones produce the best neural connections to make a virtual robot move in a desired direction.
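The generate-and-select step described above resembles a plain random search, which might be sketched as follows. The details are assumptions rather than Potter’s actual procedure: the electrode count, the delay range, the number of sequences kept and the scoring function `toy_score` are all hypothetical placeholders. In the real experiment the score would come from how well the culture steers the virtual robot.

```python
import random
from typing import Callable, List, Tuple

Pulse = Tuple[int, float]       # (electrode index, delay in milliseconds)
PulseSequence = List[Pulse]


def random_sequence(
    rng: random.Random,
    n_electrodes: int = 60,     # assumed electrode count
    max_electrodes: int = 6,    # "up to six electrodes", as in the text
    max_delay_ms: float = 100.0,  # assumed delay range
) -> PulseSequence:
    """Pick up to six distinct electrodes and give each a random delay."""
    chosen = rng.sample(range(n_electrodes), rng.randint(1, max_electrodes))
    return [(e, rng.uniform(0.0, max_delay_ms)) for e in chosen]


def select_best(
    score: Callable[[PulseSequence], float],
    n_candidates: int = 100,    # 100 sequences per culture, as in the text
    keep: int = 5,              # assumed number of sequences retained
    seed: int = 0,
) -> List[PulseSequence]:
    """Generate random candidates and return the highest-scoring ones."""
    rng = random.Random(seed)
    candidates = [random_sequence(rng) for _ in range(n_candidates)]
    return sorted(candidates, key=score, reverse=True)[:keep]


def toy_score(seq: PulseSequence, desired_heading_deg: float = 90.0) -> float:
    """Placeholder score: maps a sequence to a made-up heading and
    rewards closeness to the desired direction."""
    heading = sum(e * d for e, d in seq) % 360.0
    return -abs(heading - desired_heading_deg)


best = select_best(toy_score)
print(len(best), "sequences kept")
```

Because the candidates are generated blindly, the quality of the result depends entirely on the scoring step, which is why a computer evaluates each sequence.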

After the selected stimuli have been applied a few times, certain behaviours become embedded in the culture for some hours. In other words, the culture has been taught what to do. “It’s like training an animal to do something by gradual increments,” Potter says.

The Reading team are now planning to study whether particular parts of the culture, rather than all of it, can be more useful for performing certain tasks. They also plan to study whether the culture should be embodied in a robot early on. At the moment, they wait three to five weeks until a culture is mature before applying any sensory input. This might amount to trying to get a sensory-deprived “insane” culture to learn, says Whalley.

This work will hopefully contribute to our knowledge of how brains work, but its potential should not be exaggerated, says Potter. “This system is a model. Everything it does is merely similar to what goes on in a brain, it’s not really the same thing. We can learn about the brain – but it may mislead us.”

Warwick agrees, but believes it will be useful. “If this kind of work can make a 1 per cent difference to the life of an Alzheimer’s patient it will be worth it,” he says.